The Demo and the Production Gap

A sales engineer opens a chat window and types: "Remember that I prefer metric units." The assistant says, "Got it." Five minutes later, in the same demo, it answers a follow-up question correctly using metric units. The room nods. The deal moves forward.

Three months later the same product is in production. A user updates their preference. The next response uses the old preference and the new one in the same paragraph. A second user — different account entirely — asks an unrelated question and receives an answer that quietly references the first user's preference. The support team escalates. The vendor explains that the system "retrieves relevant context" and that "occasional inconsistencies" are being addressed in the next release.

The system is doing exactly what it was built to do. The system was never built to remember. It was built to retrieve. The conflation of the two is one of the more expensive misunderstandings in enterprise AI in 2026, and it has a name. The name is RAG.

What RAG Actually Is

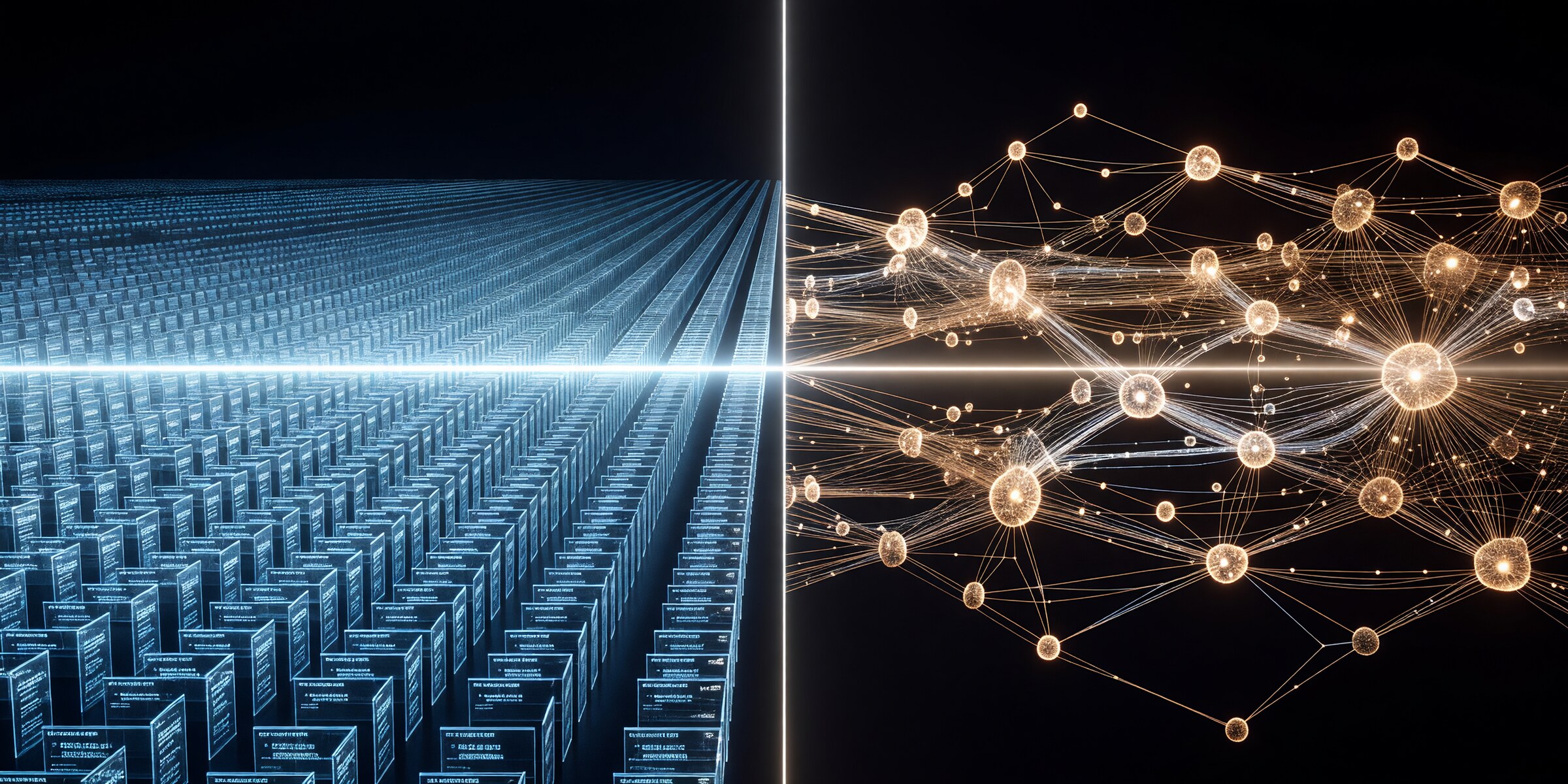

Retrieval-Augmented Generation — RAG — is a pattern, not a memory system. The pattern is: when the model needs to answer, search a vector store for the few document chunks most semantically similar to the query, paste them into the prompt, and let the model do the rest.
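The pattern is small enough to sketch in a few lines. The version below is a minimal illustration, not any particular product's implementation: `embed` is a toy bag-of-words stand-in for a real embedding model, and `retrieve`, `build_prompt`, and `CHUNKS` are hypothetical names introduced here. What it shows is the shape of the loop: score, take the top k, paste into the prompt.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query. Nothing is written back."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


def build_prompt(query: str, chunks: list[str], k: int = 2) -> str:
    """Paste the retrieved chunks into the prompt ahead of the question."""
    context = "\n".join(f"- {c}" for c in retrieve(query, chunks, k))
    return f"Context:\n{context}\n\nQuestion: {query}"


# Hypothetical reference material of the static kind RAG handles well.
CHUNKS = [
    "The device operates between 0 and 40 degrees Celsius.",
    "Warranty claims must be filed within 24 months of purchase.",
    "Firmware updates are delivered over USB.",
]

prompt = build_prompt("What temperature range does the device operate in?", CHUNKS, k=1)
```

Note what is missing from the sketch: there is no function that writes anything to `CHUNKS`, and `retrieve` takes no argument describing who is asking or when.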

It is genuinely useful. It grounds the model in your documents. It reduces certain classes of hallucination. It scales reasonably well. For static reference material — product manuals, regulatory text, knowledge-base articles — it is the right tool, and it is now the standard tool.

What it is not is state. RAG is read-only. There is no API for the model to write back. There is no notion of "this fact is now true and that earlier fact is no longer true." There is no record of what the model learned during the conversation, no way to update preferences, no mechanism for one session to build on the last. Each query is answered as if the system woke up that morning with no history.

The 2026 critique, which has now coalesced across academic and industry voices, condenses to a single line that travels well: RAG is the floor, not the ceiling. It is what you start with. It is not what production agents need.

What Memory Actually Is

Cognitive science has known for a long time that memory is not a single thing. There is semantic memory — general facts about the world, the kind of thing a textbook holds. There is episodic memory — specific events with context, sequence, and outcomes, the kind of thing a personal diary holds. There is procedural memory — skills and habits that change behaviour without being consciously retrieved.

RAG primarily targets semantic memory, and only the parts of it that someone has bothered to write down. Episodic and procedural memory — the kinds that distinguish an agent that has learned from one that has only been informed — are absent.

A working memory system, in the sense the 2026 literature now uses the term, has five things RAG does not:

- Write paths. New information enters the store under control. Conflicts are detected. Old facts are superseded, not silently overwritten.

- Reflection and consolidation. Sequences of episodes are distilled into reusable patterns — the agent's equivalent of "I have noticed that this user always asks about latency before throughput."

- Decay and forgetting. Some facts have a shelf life. A working memory system knows the difference between "this is current" and "this was true three quarters ago."

- Governance. Provenance, scoping, audit, post-deletion verification. The same primitives every regulated knowledge domain has had for decades.

- Runtime learning. The next session is not the first session. The agent's behaviour changes because of what it has remembered, not just because of what it can retrieve.

The shortest way to put it: RAG provides vector recall. Memory provides stateful recollection. Recall is passive — the same query returns the same chunks regardless of who is asking, what has changed, or what was learned last time. Recollection is active — the system maintains and updates an evolving model of what it knows, who said it, and when it stopped being true.
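The write-path and decay items in the list above can be made concrete with a small sketch. Everything here is an assumption for illustration: `Fact`, `MemoryStore`, and the `ttl` field are hypothetical names, and a real system would detect conflicts with something more robust than an exact match on subject and attribute.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Fact:
    subject: str                            # e.g. "user:42"
    attribute: str                          # e.g. "units"
    value: str
    valid_from: datetime
    valid_until: Optional[datetime] = None  # None means not yet superseded
    ttl: Optional[timedelta] = None         # optional shelf life


class MemoryStore:
    def __init__(self) -> None:
        self.facts: list[Fact] = []

    def current(self, subject: str, attribute: str, now: datetime) -> Optional[Fact]:
        """Return the live fact, skipping superseded and expired entries."""
        for fact in self.facts:
            if (fact.subject, fact.attribute) != (subject, attribute):
                continue
            if fact.valid_until is not None:
                continue  # superseded by a later write
            if fact.ttl is not None and now >= fact.valid_from + fact.ttl:
                continue  # past its shelf life
            return fact
        return None

    def write(self, subject: str, attribute: str, value: str,
              now: datetime, ttl: Optional[timedelta] = None) -> Fact:
        """Controlled write: deduplicate, and supersede rather than overwrite."""
        existing = self.current(subject, attribute, now)
        if existing is not None:
            if existing.value == value:
                return existing         # duplicate: store nothing new
            existing.valid_until = now  # conflict: close the old fact, keep it
        fact = Fact(subject, attribute, value, valid_from=now, ttl=ttl)
        self.facts.append(fact)
        return fact


t0 = datetime(2026, 1, 1)
store = MemoryStore()
store.write("user:42", "units", "imperial", now=t0)
store.write("user:42", "units", "metric", now=t0 + timedelta(days=30))
store.write("user:42", "units", "metric", now=t0 + timedelta(days=31))  # deduplicated
store.write("user:42", "location", "Berlin office", now=t0, ttl=timedelta(days=90))
```

After these writes the store holds two `units` facts, the older one closed at day 30 and still queryable, and a `location` fact that stops being returned as current once its 90 days are up.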

How the Gap Shows Up in Production

The amnesia symptoms are familiar to anyone who has run a non-trivial AI system for more than a quarter:

- Preference drift. A user changes a setting. The model retrieves both the old version and the new version on the next query. They are both "relevant" by cosine similarity. The output reflects both.

- Cross-session forgetting. The user explained the project's constraints last week. This week they explain them again. The agent does not say "you mentioned this before." It does not know.

- Cross-user bleed. A retrieval pulls in a chunk from another user's conversation because the embeddings happen to be close. The new user receives an answer that quietly references information they were never given. (A 2026 study of multi-agent workflows on clinical and workplace datasets measured 57–71% cross-user contamination in benign tasks, and 84% of the wrong answers looked correct.)

- Stale facts served as current. A document was indexed in March. The fact it asserts changed in April. The vector index does not know. The model presents the March answer with the same confidence it would present today's.

- Multi-hop collapse. The query requires connecting two pieces of information that are not semantically similar to each other but are both relevant. RAG retrieves one of them, or retrieves both as unrelated chunks. The synthesis fails.

- Token bloat from re-contextualization. Each new session begins by re-explaining context. A measurable fraction of every enterprise AI invoice — multiple recent benchmarks place it between 60 and 80 percent on long-running workflows — is the cost of agents being told things they should already remember.
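Two of the symptoms above, preference drift and cross-user bleed, are easy to reproduce in miniature. The sketch below is a toy under stated assumptions: `similarity` uses word counts in place of real embeddings, and `INDEX`, `top_k`, and the sample conversations are invented for illustration.

```python
import math
import re
from collections import Counter
from typing import Optional


def similarity(a: str, b: str) -> float:
    """Cosine similarity over word counts; a toy stand-in for embedding similarity."""
    va, vb = (Counter(re.findall(r"[a-z]+", s.lower())) for s in (a, b))
    dot = sum(va[t] * vb[t] for t in va)
    norm = math.sqrt(sum(v * v for v in va.values()) * sum(v * v for v in vb.values()))
    return dot / norm if norm else 0.0


# Conversation snippets indexed as plain chunks; the owner is only metadata.
INDEX = [
    {"user": "alice", "text": "I prefer imperial units for distance"},
    {"user": "alice", "text": "I now prefer metric units for distance"},
    {"user": "bob", "text": "I prefer nautical units for distance at sea"},
    {"user": "bob", "text": "My invoice address changed last month"},
]


def top_k(query: str, k: int, user: Optional[str] = None) -> list[dict]:
    """Rank chunks by similarity, optionally restricted to one user's chunks."""
    pool = [c for c in INDEX if user is None or c["user"] == user]
    return sorted(pool, key=lambda c: similarity(query, c["text"]), reverse=True)[:k]


query = "which units does the user prefer for distance"
unscoped = top_k(query, k=3)               # includes a chunk that belongs to bob
scoped = top_k(query, k=3, user="alice")   # no bleed, but old and new both return
```

Filtering by user removes the bleed, but the scoped result still contains both of Alice's statements, and in this toy the superseded one ranks first. Scoping is a retrieval filter; resolving which preference is current needs a write path.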

The first article in this series, The Rediscovery Tax, put a label on this cost at the organizational level. RAG-as-memory is the technical mechanism by which the rediscovery tax is paid every time an agent is invoked.

Five Hallucination Patterns That Come from RAG-as-Memory Specifically

Hallucination is often discussed as if it were a single phenomenon. It is not. When RAG is used as memory, five distinct failure modes appear, each with its own signature:

- Context pollution. Multiple retrieved chunks contradict each other. The model resolves the contradiction by inventing a synthesis that satisfies neither.

- Temporal confidence inflation. A retrieved chunk has no validity window. The model presents it as current because it was retrieved, and because nothing in the prompt says otherwise.

- Snippet synthesis failure. The top-k retrieved chunks are accurate fragments. The narrative the model builds from them is not — the connective tissue is invented.

- Provenance loss. The model cannot distinguish between "this is from the verified internal handbook" and "this is from a draft document an intern uploaded last quarter." Both arrive in the prompt as text.

- One-shot personalization failure. The relevant fact about the user is episodic — something that happened, not something that is "about" the topic. Cosine similarity does not surface it. The agent answers as it would for any user.
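Temporal confidence inflation and provenance loss both come down to metadata that never reaches the prompt. As a rough sketch of the alternative, assuming chunks carry a source label, a trust flag, and a validity window (the `Chunk` fields and `render_context` are hypothetical, not any vendor's schema), the context can be rendered so that the model sees where each line came from and expired lines are dropped:

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class Chunk:
    text: str
    source: str                         # where the text came from
    verified: bool                      # hypothetical trust flag set at ingest
    valid_from: date
    valid_until: Optional[date] = None  # None means no known end of validity


def render_context(chunks: list[Chunk], today: date) -> str:
    """Label each chunk with provenance and drop chunks whose validity has ended."""
    lines = []
    for c in chunks:
        if c.valid_until is not None and c.valid_until <= today:
            continue  # expired: do not present it as current
        trust = "verified" if c.verified else "unverified"
        lines.append(f"[{c.source} | {trust} | as of {c.valid_from.isoformat()}] {c.text}")
    return "\n".join(lines)


chunks = [
    Chunk("Refunds are processed within 14 days.", "handbook-v3", True, date(2026, 1, 10)),
    Chunk("Refunds are processed within 30 days.", "handbook-v2", True,
          date(2025, 1, 10), date(2026, 1, 10)),
    Chunk("Refunds may be instant for premium users.", "draft-upload", False, date(2026, 3, 2)),
]

context = render_context(chunks, today=date(2026, 6, 1))
```

This does not make the model trustworthy by itself; it only ensures the distinction between a verified handbook and an unverified draft is present in the text the model reads.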

The legal-AI hallucination rate, measured at 17 to 33 percent on commercial systems with retrieval-augmented architectures, is in significant part a product of these failure modes. Retrieval reduced one class of hallucination and exposed another.

What 2026 Has Built to Close the Gap

The good news is that the gap has been visible long enough that an actual category of memory systems exists in production now, distinct from the vector-store-as-memory pattern. The leaders are worth knowing by name, because the architectural choices each one makes illuminate what memory actually requires.

- Mem0 treats memory as user/agent/session-scoped atomic facts and preferences. It uses an LLM pipeline at write time to extract, deduplicate, and update — so the second time a user says "I prefer metric," the store does not end up with two entries. Hybrid vector + graph + key-value backing. It has the broadest production adoption of the current cohort.

- Zep, built on the open Graphiti engine, treats memory as a temporal knowledge graph. Episodes are first-class. Each fact carries a "valid from" and "valid until," and contradictions are handled by supersession rather than deletion — the old version remains queryable, marked as superseded. This is what bi-temporal modelling looks like in production.

- Letta (the project formerly known as MemGPT) treats the agent itself as the memory manager. Persistent core blocks (persona, goals) live in the prompt at all times; recall storage holds searchable history; archival storage holds the long tail. The agent uses tools to page memory in and out of its own context.

- Cognee focuses on building structured knowledge graphs from unstructured and multimodal sources — closer to a memory-aware ingestion pipeline than a conversational store, but powerful for institutional knowledge.

- MemPalace takes the opposite philosophical position: store everything verbatim in a spatially organized hierarchy and let retrieval find what matters. The bet is that aggressive LLM-side extraction loses fidelity.

- A-MEM is a research direction (NeurIPS 2025) in which the agent itself organizes memory in a Zettelkasten-inspired network of linked atomic notes that it grows and revises.

These are not minor variations on RAG. They make a different architectural bet: that memory is write-then-read, with serious work happening at the write step — extraction, deduplication, conflict resolution, validity assignment — so that the read step can be fast, multi-strategy, and trustworthy.

The Five Patterns Becoming Table Stakes

Watch the 2026 cohort long enough and a convergence becomes visible. Five patterns are appearing across systems that were originally designed independently:

- Write-then-read. Heavy lifting at ingest time — extraction, structuring, reflection — so reads are fast and combine multiple strategies (semantic vector + graph traversal + temporal + keyword + rerank).

- Events as first-class citizens. Episodes — what happened, when, and what changed — are stored as objects, not just as chunks of text.

- Hybrid graph + vector. Pure-vector storage is no longer state of the art. Graphs handle relations, temporal queries, and precise lookups; vectors handle fuzzy semantic similarity. Most leaders combine both, and many add a key-value or relational layer for structured fields.

- Bi-temporal validity and contradiction handling. Two timestamps per fact — when it became true in the world, and when the system learned about it — with supersession rather than overwrite. The audit trail is the record.

- Reflection layers. Periodic consolidation of episodes into higher-level patterns. The pattern descends directly from the 2023 Generative Agents paper and now appears in some form in nearly every serious production system.
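Bi-temporal validity is the least intuitive of the five patterns, so a small sketch may help. It is an illustration under assumptions, not the data model of Zep or any other system named above: `Row`, `BiTemporalStore`, `assert_fact`, and `as_of` are hypothetical names. The store is append-oriented: a correction closes the old belief in system time and re-records it with a bounded validity window.

```python
from dataclasses import dataclass, replace
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class Row:
    key: str
    value: str
    valid_from: date                      # when it became true in the world
    valid_until: Optional[date]           # when it stopped being true (None = open)
    recorded_at: date                     # when the system learned it
    superseded_at: Optional[date] = None  # when the system replaced this belief


class BiTemporalStore:
    def __init__(self) -> None:
        self.rows: list[Row] = []

    def assert_fact(self, key: str, value: str, valid_from: date, recorded_at: date) -> None:
        """Record a new value; supersede the open belief instead of overwriting it."""
        for i, row in enumerate(list(self.rows)):
            if row.key == key and row.superseded_at is None and row.valid_until is None:
                self.rows[i] = replace(row, superseded_at=recorded_at)
                if row.valid_from < valid_from:
                    # Re-record the old value, now with a bounded validity window.
                    self.rows.append(replace(row, valid_until=valid_from, recorded_at=recorded_at))
        self.rows.append(Row(key, value, valid_from, None, recorded_at))

    def as_of(self, key: str, world: date, known: date) -> Optional[str]:
        """What did the system believe on `known` about the world on `world`?"""
        for row in self.rows:
            if row.key != key:
                continue
            in_system_time = row.recorded_at <= known and (
                row.superseded_at is None or known < row.superseded_at)
            in_world_time = row.valid_from <= world and (
                row.valid_until is None or world < row.valid_until)
            if in_system_time and in_world_time:
                return row.value
        return None


store = BiTemporalStore()
# Indexed in March; the fact changed on 1 April; the system learned of it on 20 April.
store.assert_fact("plan.price", "10", valid_from=date(2026, 3, 1), recorded_at=date(2026, 3, 1))
store.assert_fact("plan.price", "12", valid_from=date(2026, 4, 1), recorded_at=date(2026, 4, 20))
```

With two time axes the store can answer both "what is the price on 10 April?" and "what did we believe on 10 April?", which is the question an audit asks after a stale answer has already gone out.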

Notice what is not yet table stakes: governance. The audit, scoping, role-bounded access, and human-in-the-loop oversight that mature regulated industries take for granted are still bespoke in most memory systems. This is the gap we wrote about in The Trust Chain Problem, and it is the gap The Phase 3 Problem tracked into multi-agent territory. RAG-as-memory is the foundation those gaps sit on. Replacing it with actual memory is the precondition for closing them.

What This Means for Buyers

If your organization is evaluating an "AI with memory" offering in 2026, the question to ask is not whether the system has memory. Almost everyone now claims it does. The questions to ask are about the shape of that memory.

- Where is the write path, and what controls it? A system without write authorization is not memory. It is a notebook anyone can scribble in.

- How are contradictions resolved? Overwrite, supersession, or "both stay and we hope the prompt sorts it out"? Only the second answer is durable.

- Is there a notion of validity time, distinct from creation time? If not, every fact is treated as current. Reality does not work that way.

- What is the unit of forgetting? A row, a document, a fact, a relationship? And is forgetting verifiable, or is it a soft flag that retrieval still occasionally returns?

- Who can see what? Memory without scoping is memory waiting to leak.

- Where does the agent end and the memory system begin? Agent-managed memory (Letta, A-MEM) trades latency for autonomy. System-managed memory (Mem0, Zep) trades autonomy for operational clarity. Neither is wrong. The choice should be conscious.
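The forgetting question in the list above has a testable form. The sketch below assumes a primary store plus a derived keyword index (both invented here, as are the `Memory` class and its method names) and shows the difference between a soft flag and a deletion that can be verified by probing retrieval afterwards.

```python
class Memory:
    def __init__(self) -> None:
        self.records: dict[str, dict] = {}    # primary store: id -> record
        self.index: dict[str, set[str]] = {}  # derived index: token -> record ids

    def add(self, rec_id: str, owner: str, text: str) -> None:
        self.records[rec_id] = {"owner": owner, "text": text, "deleted": False}
        for token in text.lower().split():
            self.index.setdefault(token, set()).add(rec_id)

    def search(self, query: str) -> list[str]:
        """Return ids of records sharing any token with the query."""
        hits: set[str] = set()
        for token in query.lower().split():
            hits |= self.index.get(token, set())
        return sorted(hits)

    def soft_delete(self, rec_id: str) -> None:
        """Flag only: the derived index is untouched, so retrieval still finds it."""
        self.records[rec_id]["deleted"] = True

    def hard_delete(self, rec_id: str) -> None:
        """Remove the record from the primary store and from every index entry."""
        self.records.pop(rec_id, None)
        for ids in self.index.values():
            ids.discard(rec_id)

    def verify_forgotten(self, rec_id: str, probes: list[str]) -> bool:
        """Post-deletion check: the id is gone and no probe query returns it."""
        return rec_id not in self.records and all(
            rec_id not in self.search(p) for p in probes)


memory = Memory()
memory.add("m1", "alice", "prefers metric units")
memory.soft_delete("m1")
still_retrievable = memory.search("metric")                       # the soft flag leaks
verified_before = memory.verify_forgotten("m1", ["metric units"])
memory.hard_delete("m1")
verified_after = memory.verify_forgotten("m1", ["metric units"])
```

A vendor whose answer to "is forgetting verifiable?" resembles `soft_delete` is describing a flag, not deletion. In a real deployment the probe set would also need to cover caches, replicas, and any summaries derived from the deleted record.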

In production, these systems rarely stand alone. The pattern that wins is layered: a thin agent-side scratchpad for working state, a system-side memory store for governed institutional knowledge, RAG for static reference material that does not need to evolve. Calling the whole stack "memory" is fine. Calling RAG by itself "memory" is not.

The Conversation We Are Already Having

The series this article belongs to has been unusual in its honesty about which problem the industry is actually solving. The Rediscovery Tax named the cost of stateless agents. The Trust Chain Problem named the governance vacuum at the institutional layer. Shadow Memories named what happens when memory accumulates invisibly inside the organization. The Phase 3 Problem named what happens when those memories start reaching across agents.

Each of those articles assumed something that this one makes explicit: the systems most enterprises currently call "memory" are not memory. They are retrieval. The mismatch is the source of the rediscovery tax, the trust chain gap, the shadow memory accumulation, and the multi-agent contamination cascade. Closing the mismatch is not optional. It is the work of the next two years of enterprise AI.

We describe this from practice, not theory. We build systems that take the distinction seriously, and we have watched what happens to operational cost and trust posture when the foundation underneath the agents stops being a vector store with a confident voice.