Your AI assistant confidently refactors a critical service. It explains that it's following the architecture patterns established in previous sessions — the ones where you migrated away from the monolith. It references specific decisions: the event-driven pattern, the separation of read and write models, the caching strategy.

There's just one problem. The caching strategy it remembers was abandoned two months ago after it caused a data consistency issue in production. The AI doesn't know that. Its memory says it's current. Nothing in the system flagged it as stale.

The refactoring ships. The bug reappears. And you spend a day debugging something that was already debugged — not because the AI forgot, but because it remembered something it shouldn't have trusted.

This is the trust chain problem. And as AI memory systems get better at remembering, it's going to get worse.

Remembering Wrong Is Worse Than Forgetting

In "The Rediscovery Tax," we explored what happens when AI can't remember anything between sessions. The cost is real: re-orientation, repeated mistakes, knowledge that never compounds. The industry heard the message. The AI memory race is now fully underway.

Mem0 has 48,000 GitHub stars. Zep's Graphiti builds temporal knowledge graphs. Letta runs persistent agent runtimes. MemPalace went viral in April 2026 with its spatial memory architecture. Dozens of startups are attacking the problem from every angle.

But in the rush to give AI a memory, almost everyone is skipping a question that every other knowledge-bearing institution learned to ask centuries ago:

How do you know the memory is correct?

An AI that forgets is frustrating. An AI that confidently acts on stale, corrupted, or conflicting knowledge is dangerous. The amnesia problem had a clear cost. The trust chain problem has hidden liabilities.

The Governance Gap

We surveyed the leading AI memory systems, dug through their documentation and architectures, and cross-referenced industry reports. The pattern is consistent: memory is treated as a storage problem, not a governance problem.

One finding bears emphasis. We examined eight of the most prominent AI memory frameworks. None provide immutable provenance tracking. None offer cryptographic integrity verification. None implement the kind of audit infrastructure that a first-year accounting student would consider table stakes.

Here's what's missing:

- Provenance. Where did this memory come from? Was it extracted from a document, inferred by an AI, stated by a user, or synthesized from multiple sources? When you retrieve a fact, can you trace its origin? In most systems: no.

- Temporal validity. When was this true? Is it still true? Was it superseded? Zep's Graphiti is a notable exception here — it tracks fact windows and invalidation timestamps. Most systems treat knowledge as eternally valid once stored.

- Conflict resolution. When two memories contradict each other, which one wins? By what rule? Most systems don't even detect the conflict, let alone resolve it. The AI just retrieves whichever chunk scores highest on similarity — regardless of which is accurate.

- Governance. Who decides what gets remembered? What's the retention policy? Can a memory be retracted? Who audits the memory for accuracy over time? These gaps aren't missing features. They point to a missing framework.
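
To make the first two gaps concrete, here is a minimal sketch of a memory record that carries its own origin and validity window. The `MemoryRecord` type, the `Source` categories, the field names, and the example dates are all hypothetical; none of them come from the frameworks named above.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from enum import Enum
from typing import Optional


class Source(Enum):
    """Hypothetical origin categories, mirroring the provenance questions above."""
    DOCUMENT = "document"
    AI_INFERENCE = "ai_inference"
    USER_STATEMENT = "user_statement"
    SYNTHESIS = "synthesis"


@dataclass(frozen=True)
class MemoryRecord:
    content: str
    source: Source                        # provenance: how the memory was produced
    origin_ref: str                       # provenance: session, file, or person it came from
    valid_from: datetime                  # temporal validity: when it became true
    valid_to: Optional[datetime] = None   # None means "not known to be superseded"
    retracted: bool = False               # governance: a memory can be withdrawn

    def is_current(self, at: datetime) -> bool:
        """True if the record is not retracted and `at` falls inside its validity window."""
        if self.retracted or at < self.valid_from:
            return False
        return self.valid_to is None or at < self.valid_to


# Illustrative values only: a caching decision that was later abandoned.
caching = MemoryRecord(
    content="Use the shared cache for read models",
    source=Source.AI_INFERENCE,
    origin_ref="design-session-12",
    valid_from=datetime(2026, 1, 15, tzinfo=timezone.utc),
    valid_to=datetime(2026, 3, 1, tzinfo=timezone.utc),
)
```

With a record like this, the assistant in the opening story could have checked `caching.is_current(now)` before acting, provided someone had set `valid_to` when the strategy was abandoned. The structure only helps if the reversal is actually written back.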

Why This Is Becoming Urgent

When AI memory systems were experimental and small-scale, the governance gap was academic. A developer playing with a memory layer in a side project could tolerate stale facts and missing provenance. The stakes were low.

That era is ending. Organizations are now deploying persistent AI memory at institutional scale — knowledge bases that span teams, projects, and years. When the memory is one developer's notes, a bad recall is an inconvenience. When the memory is an organization's institutional knowledge, a bad recall is a decision made on false premises.

And the attack surface is growing. One in four organizations has already been the victim of data poisoning. Shadow AI, meaning employees using AI tools outside governed channels, has reached 59% of employees. Now imagine those ungoverned AI interactions generating persistent memories that feed into future decisions. The contamination compounds silently.

We're also starting to talk about "AI readiness" — measuring how prepared organizations are to work with AI effectively. But the conversation is almost entirely about tool adoption and workflow integration. Can your team use AI? Can your data feed into AI systems? Can your website even communicate with AI agents?

These are necessary questions. They are not sufficient. AI readiness without trust infrastructure is like digital transformation without cybersecurity. You've adopted the tools. You haven't secured the foundation.

When 59% of employees use AI tools outside governed channels, every interaction is potentially generating persistent knowledge — context, preferences, decisions — that feeds back into future AI behavior. Without governance, you don't know what your organization's AI "remembers." You don't know where those memories came from. You can't verify their accuracy. And you can't retract them when they're wrong.

This is not a theoretical risk. It's the natural consequence of adding memory to systems that already operate without adequate oversight.

What Trust Actually Requires

Every mature knowledge-bearing domain — finance, medicine, law, supply chain management — solved the trust problem before it solved the efficiency problem. They didn't start by asking "how do we store more information faster?" They started by asking "how do we know what we store is accurate, current, and verifiable?"

The principles are not exotic. They're well-established:

- Provenance as first-class metadata. Every piece of knowledge needs an origin story: where it came from, who or what contributed it, when it was recorded. Financial ledgers don't just record transactions — they record who authorized them. Medical records don't just note diagnoses — they note which clinician made them and based on what evidence.

- Temporal awareness as infrastructure. Knowledge isn't timeless. It has a valid-from and a valid-to. The caching strategy that was correct in January may have been deprecated in March. A system that treats both the January and March versions as equally current isn't a memory — it's a contradiction engine.

- Integrity verification. Can you prove that a piece of knowledge hasn't been tampered with or corrupted since it was recorded? Supply chains solve this with chain-of-custody documentation. Financial systems solve it with reconciliation and audit. AI memory systems currently solve it with... nothing.

- Governance as structure, not afterthought. Who decides what gets remembered? What are the retention and retirement policies? When knowledge conflicts, what's the resolution mechanism? These aren't nice-to-have features. They're the difference between a knowledge system and a knowledge landfill.
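
Integrity verification is the principle that maps most directly onto code. One common technique, offered here as a sketch and not as a description of any existing memory system, is a hash chain: each entry's hash covers its own content plus the previous entry's hash, so editing an old entry breaks every hash after it. The function names and the `"genesis"` seed value are assumptions made for illustration.

```python
import hashlib
import json


def entry_hash(payload: dict, prev_hash: str) -> str:
    """Hash an entry's content together with its predecessor's hash."""
    body = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("utf-8") + body).hexdigest()


def append(log: list, payload: dict) -> None:
    """Add an entry whose hash chains to the current tail of the log."""
    prev_hash = log[-1]["hash"] if log else "genesis"
    log.append({"payload": payload, "prev": prev_hash,
                "hash": entry_hash(payload, prev_hash)})


def verify(log: list) -> bool:
    """Recompute every hash in order; any edited or reordered entry fails."""
    prev_hash = "genesis"
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != entry_hash(entry["payload"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append(log, {"fact": "Caching strategy adopted", "author": "design session"})
append(log, {"fact": "Caching strategy abandoned", "author": "post-incident review"})
```

A chain like this is only tamper-evident if the latest hash is also kept somewhere the writer of the log cannot change. Anyone able to rewrite the whole log can recompute every hash, so this sketch detects accidental corruption and partial edits, not a determined insider.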

None of this is conceptually new. What's new is that we're building AI systems that accumulate institutional knowledge at unprecedented speed — and we're doing it without any of the infrastructure that every previous institutional knowledge system required.

What We've Seen in Practice

We've been running persistent human-AI collaboration across more than 170 sessions and 39 projects. We've experienced the trust chain problem from the inside. Some examples:

- The confident citation of deprecated knowledge. An AI assistant referenced an architecture decision from two months prior — correctly quoting the original reasoning — without knowing that a subsequent session had explicitly reversed the decision. The original entry was still in the knowledge base. The reversal was too. Nothing connected them.

- The invisible conflict. Two separate sessions produced knowledge entries that directly contradicted each other — one from a design session, one from a post-incident review. Both were valid at the time of writing. Neither was flagged as superseding the other. The next agent to search the topic retrieved whichever chunk happened to score higher on vector similarity.

- The provenance black hole. We found knowledge entries where we couldn't determine whether a claim was based on external research, first-hand experience, or an AI's inference during a brainstorming session. The content was there. The confidence level was absent. An AI treating a speculative brainstorm with the same weight as a verified deployment log is a system that doesn't understand what it knows.

- The stale fact that kept compounding. A piece of infrastructure documentation remained in the knowledge base after the infrastructure was replaced. Over several sessions, other knowledge built on top of it. By the time we caught it, the stale fact had influenced decisions across three projects. The error didn't stay contained: it propagated through the knowledge graph the way an undetected accounting error propagates through dependent ledgers.
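
The first two failures above share a cause: nothing linked the later entry to the one it replaced. As a minimal sketch of what such a link could buy, the code below follows explicit `supersedes` pointers before ranking, so a high-similarity hit on an outdated entry resolves to its replacement. The `Entry` type, the `resolve` function, and the similarity scores are hypothetical, and the sketch assumes someone records the link when a decision is reversed.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Entry:
    entry_id: str
    text: str
    supersedes: Optional[str] = None  # id of the entry this one replaces, if any


def resolve(hits: Dict[str, float], store: List[Entry]) -> List[Entry]:
    """Map each retrieved id to its latest replacement, then rank by best score.

    `hits` maps entry ids to retriever similarity scores (assumed to be given).
    """
    by_id = {e.entry_id: e for e in store}
    replaced_by = {e.supersedes: e.entry_id for e in store if e.supersedes}
    best: Dict[str, float] = {}
    for entry_id, score in hits.items():
        seen = set()  # guards against accidental cycles in the links
        while entry_id in replaced_by and entry_id not in seen:
            seen.add(entry_id)
            entry_id = replaced_by[entry_id]
        best[entry_id] = max(score, best.get(entry_id, 0.0))
    ranked = sorted(best, key=lambda i: best[i], reverse=True)
    return [by_id[i] for i in ranked]


store = [
    Entry("design-1", "Adopt the caching strategy"),
    Entry("review-1", "Caching strategy abandoned after consistency incident",
          supersedes="design-1"),
]
# The retriever only surfaced the outdated entry.
results = resolve({"design-1": 0.91}, store)
```

Here `results` contains only the post-incident review, even though the retriever never returned it. The design choice is that supersession is a recorded fact, not something inferred from similarity or recency.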

Principles That Helped

We don't have a universal solution. But we've found that certain principles, applied consistently, dramatically reduce the trust problem:

- Timestamp everything, twice. When was this knowledge created? When was it last verified as current? The longer it has been since that last verification, the greater your staleness risk. A fact verified yesterday is more trustworthy than a fact recorded last year and never revisited, regardless of how precisely the original was written.

- Record what failed alongside what succeeded. Negative knowledge — "we tried X and it caused Y" — is as valuable as positive knowledge. Without it, you're not just missing information. You're missing the guardrails that prevent repeat mistakes. This is the most commonly overlooked form of institutional knowledge.

- Relationships matter as much as facts. A decision recorded in isolation is a fact. A decision connected to its context — the problem it solved, the alternatives considered, the constraints that shaped it — is understanding. When knowledge entries link to their antecedents and consequences, stale facts become detectable because their connections to superseding entries make the timeline visible.

- Treat verification as a continuous process, not a one-time event. Knowledge doesn't stay accurate just because it was accurate when stored. Regular integrity checks — does this still match reality? — are the knowledge equivalent of financial reconciliation. They catch drift before it causes damage.
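
The first and last principles combine naturally into a review queue. The sketch below is one possible reading: it measures staleness as the time since a fact was last verified and lists the facts that are overdue. The `Fact` type, the function names, and the 90-day threshold are assumptions chosen for illustration, not recommendations.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List


@dataclass
class Fact:
    text: str
    created_at: datetime        # when the knowledge was recorded
    last_verified_at: datetime  # when someone last confirmed it still holds


def staleness(fact: Fact, now: datetime) -> timedelta:
    """Time elapsed since the fact was last confirmed against reality."""
    return now - fact.last_verified_at


def due_for_review(facts: List[Fact], now: datetime,
                   max_age: timedelta = timedelta(days=90)) -> List[Fact]:
    """Facts not verified within `max_age`, least recently verified first."""
    overdue = [f for f in facts if staleness(f, now) > max_age]
    return sorted(overdue, key=lambda f: f.last_verified_at)


now = datetime(2026, 6, 1, tzinfo=timezone.utc)
a_year_ago = now - timedelta(days=365)
facts = [
    Fact("Service A runs on the new cluster", a_year_ago, now - timedelta(days=1)),
    Fact("Service B uses the old load balancer", a_year_ago, a_year_ago),
]
queue = due_for_review(facts, now)
```

Both facts are a year old, but only the second lands in `queue`, because the first was confirmed yesterday. Age alone says little; time since verification is what the queue acts on.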

Trust Is Infrastructure

The deeper pattern here isn't about AI specifically. It's about how societies handle institutional knowledge. Every time a new domain starts accumulating knowledge at scale, it goes through the same evolution:

Phase 1: Store everything. The priority is not losing information. File it, save it, dump it somewhere. This is where most AI memory systems are today.

Phase 2: Retrieve efficiently. The priority shifts to finding the right information quickly. Better indexing, better search, better relevance ranking. This is where the AI memory market is competing — faster retrieval, better embeddings, smarter search.

Phase 3: Govern what's stored. The priority shifts again — to accuracy, provenance, integrity, and lifecycle management. This is where financial accounting is. Where medical records are. Where supply chain management is. This is where AI memory has not yet arrived.

You can't skip Phase 3. Every domain that tried to scale institutional knowledge without governance eventually hit a trust crisis — Enron in finance, contaminated records in healthcare, counterfeit products in supply chains. The crisis always forced the governance investment that should have been made from the beginning.

AI memory is still in the pre-crisis phase. The question isn't whether trust infrastructure will be needed. It's whether we build it proactively or reactively — before or after the first high-profile failure of AI-assisted institutional memory.

Open Questions

We think the right questions are becoming clearer, even if the answers aren't:

- Who governs AI memory at organizational scale? When your AI accumulates knowledge across hundreds of sessions and dozens of users, who's responsible for its accuracy? Is it the users who contributed the knowledge? The team that deployed the system? The vendor? We don't have good answers yet — and "nobody" is the default in most organizations today.

- What happens when AI memories conflict? If two teams store contradictory knowledge in the same system, what's the resolution mechanism? Currently: the retrieval algorithm picks the higher-scoring chunk. That's not governance. That's a coin flip weighted by embedding proximity.

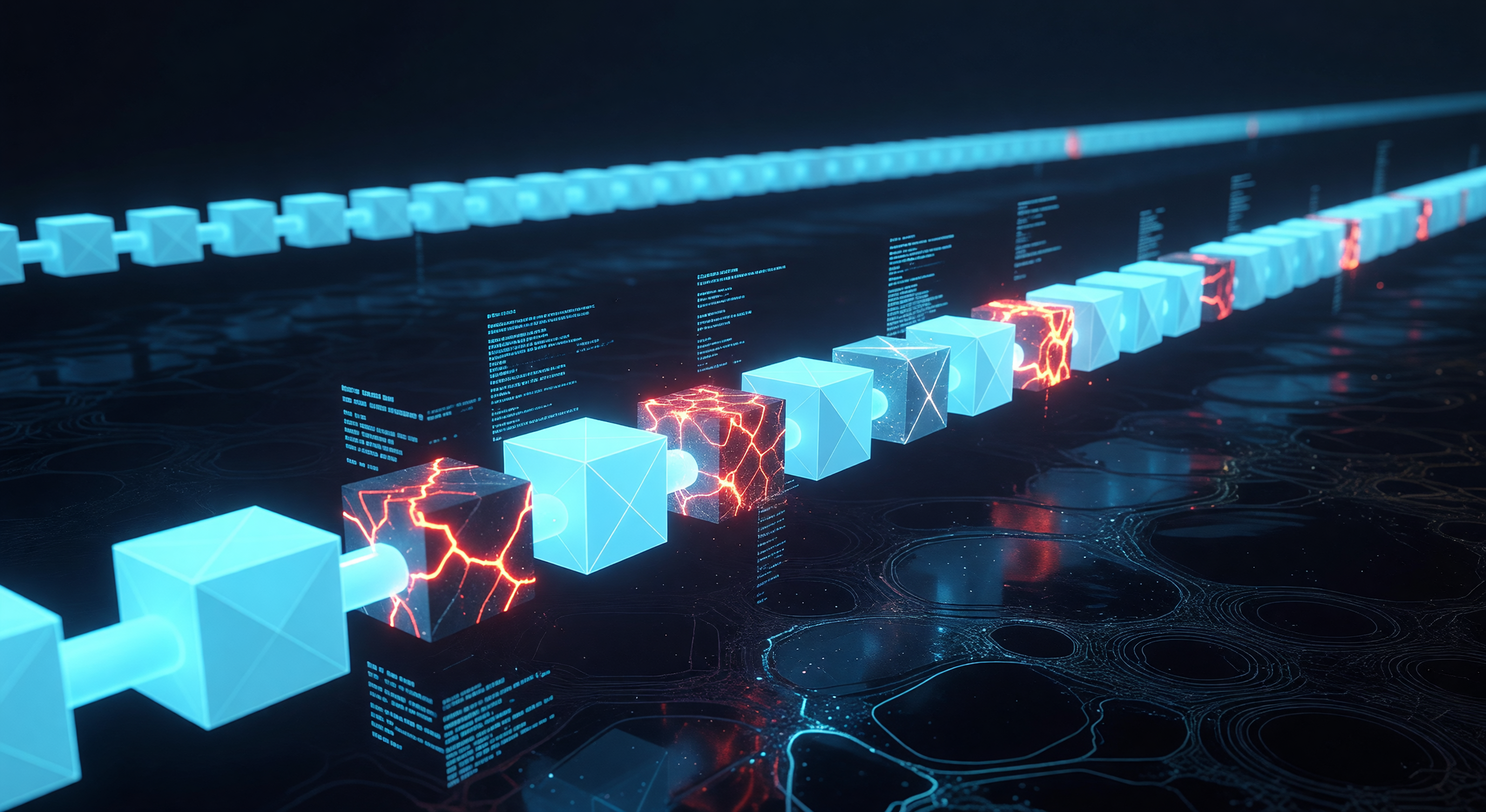

- Can we build trust without centralization? The obvious answer to governance is to centralize control. But centralization creates bottlenecks and single points of failure. The more interesting question is whether distributed trust — the kind that blockchains demonstrated for financial transactions — can work for institutional knowledge.

- Is "AI readiness" meaningful without trust infrastructure? If an organization has adopted AI tools, trained its staff, and connected its data — but has no provenance tracking, no temporal validity, no integrity verification, and no governance framework for AI-generated knowledge — is it AI ready? Or has it built a house on sand?